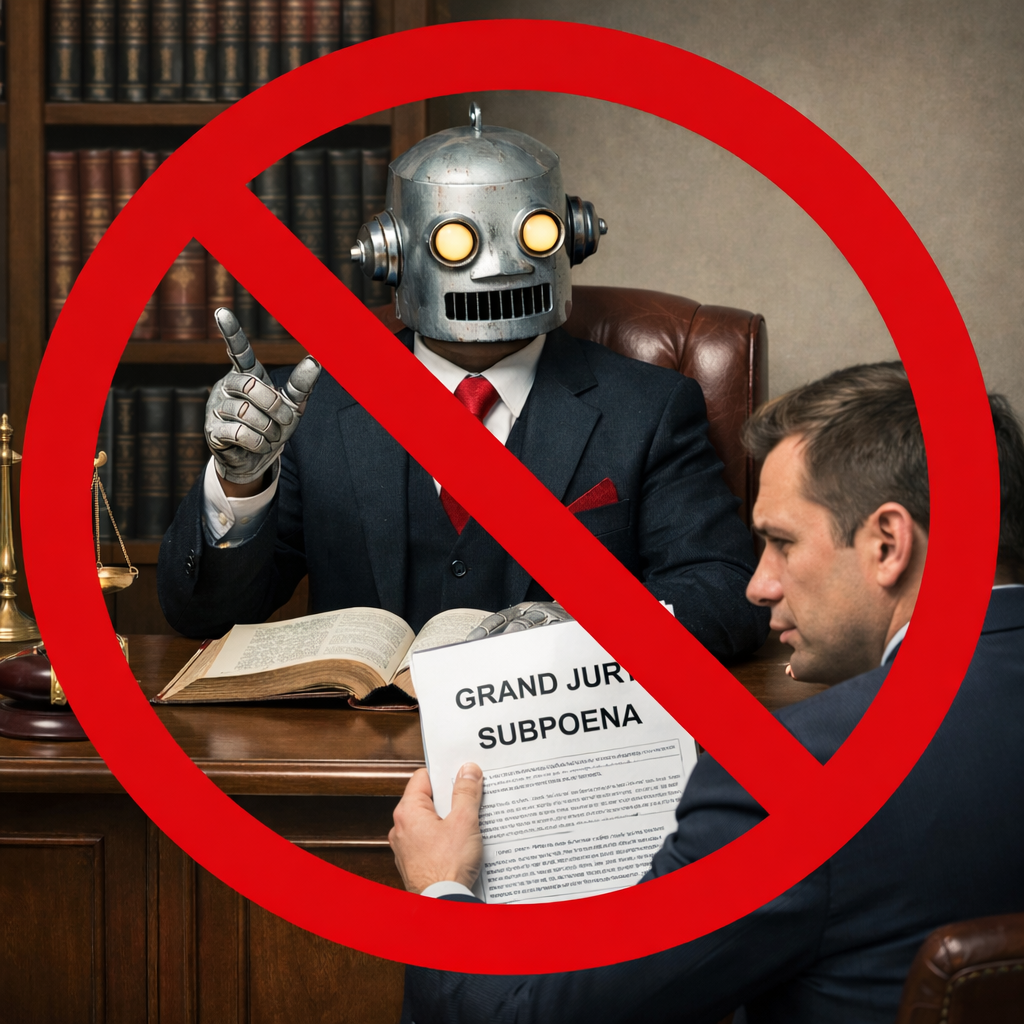

The Normal Rules Apply – Even smart computers aren’t your lawyer

A familiar pattern repeats during technological revolutions. The tools seem unprecedented, and the scale seems transformative. The language is new: algorithms, neural networks, generative models, and training data. So, if the technology is new, the old rules of law must not apply. Right?

Wrong. The law has encountered steam power, telegraphs, radio, airplanes, mainframes, laptops, and the internet. Each arrival was transformative. Each prompted a feeling that old law couldn’t apply. But more often than not, the old rules were waiting. To be sure, Congress often changes the law to account for new technology. But courts generally don’t change existing law just because technology changes.

The law is still there

We have seen this in antitrust law. When digital platforms started to dominate modern commerce, many seemed to feel that statutes written in the age of railroads could not govern computer networks. Yet United States v. Microsoft Corp., 253 F.3d 34 (D.C. Cir. 2001), applied established Sherman Act principles to software markets.

We saw this again in the early wave of artificial-intelligence copyright litigation. When AI systems began producing creative works, it was suggested that the Copyright Act didn’t contain the answer. Yet, in Thaler v. Perlmutter, 130 F.4th 1039 (D.C. Cir. 2025), the D.C. Circuit applied the statute as written. Copyright requires human authorship. A machine, however sophisticated, is not a human author.

In Bartz v. Anthropic, No. 3:24-cv-05417-WHA, Dkt. 231 (N.D. Cal. June 23, 2025), the court took the same approach. It did not treat generative AI companies as a category beyond the Copyright Act. It analyzed the claims through familiar copyright principles. Using books to train AI was transformative and therefore fair use. Copying the books in the first place was a different matter: downloading pirated copies to build a library was “pirating,” in the words of the court, and was not fair use. The last time I looked, the proposed settlement was reported to be at least $1.5 billion.

Privilege meets AI

And now AI has met privilege law. It was not a happy meeting for AI.

In United States v. Heppner, No. 1:25-cr-00503-JSR (S.D.N.Y. Feb. 17, 2026), the court addressed whether a defendant’s exchanges with a generative AI platform were protected by attorney-client privilege or by the work-product doctrine. The defendant, facing federal fraud charges, had used the AI system “Claude” to generate written analyses after receiving a grand jury subpoena. He later asserted that those materials were privileged because he intended to use them in preparing his defense and to share them with counsel.

The court’s reasoning was not really surprising. Attorney-client privilege protects confidential communications between a client and a lawyer for the purpose of obtaining legal advice.

Claude is not a lawyer. The exchanges therefore were not communications between attorney and client, which is exactly what attorney-client privilege protects.

Confidentiality was another problem. The platform’s privacy policy permitted collection and potential disclosure of user inputs and outputs. A communication voluntarily transmitted to a third party—particularly one that reserves rights to use or disclose the information—does not possess the confidentiality that privilege requires.

The purpose also mattered. The AI platform itself, wisely, disclaims giving legal advice and recommends consulting a qualified attorney. Whatever assistance the tool provided, it was not the rendering of legal counsel within the meaning of the doctrine.

The work-product claim fared no better. That doctrine protects materials prepared by or at the direction of counsel in anticipation of litigation. Here, the defendant generated the materials himself. Counsel did not direct the AI searches, and the documents were not prepared by counsel or counsel’s agent. The elements were not satisfied simply because litigation was foreseeable.

Judge Rakoff concluded that generative AI may represent a new frontier, but its novelty does not displace longstanding legal principles. So, on privilege, the score now stands at Law 1, AI 0.

And so. . .

Artificial intelligence will undoubtedly reshape legal practice. It will accelerate research, draft memoranda, and assist in analysis. (It may also, as we now know, make plenty of mistakes along the way.) It may even influence how arguments are framed and tested. But it does not convert software into counsel or disclosure into confidentiality.

In short, new tools often create new issues. But they rarely create new law unless the legislature acts. The old rules are still there.